ARS-111GL-NHR

NVIDIA GH200 Grace Hopper Superchip GPU Server supporting NVIDIA BlueField-3 or NVIDIA ConnectX-7

This system currently supports only two E1.S drives, connected directly to the processor, and only the onboard GPU.

- High-density 1U GPU system with integrated NVIDIA® H100 GPU

- NVIDIA Grace Hopper™ Superchip (Grace CPU and H100 GPU)

- NVLink® Chip-2-Chip (C2C) high-bandwidth, low-latency interconnect between CPU and GPU at 900GB/s

Key Applications

- High Performance Computing

- AI/Deep Learning Training and Inference

- Large Language Model (LLM) and Generative AI

| Product SKUs | ARS-111GL-NHR |

| Motherboard | Super G1SMH-G |

| Processor | |

| CPU | NVIDIA 72-core NVIDIA Grace CPU on GH200 Grace Hopper™ Superchip |

| Core Count | Up to 72C/144T |

| Note | NVIDIA 72-core NVIDIA Grace CPU on GH200 Grace Hopper™ Superchip |

| GPU | |

| Max GPU Count | 1 onboard GPU(s) |

| Supported GPU | NVIDIA: H100 Tensor Core GPU on GH200 Grace Hopper™ Superchip (Air-cooled) |

| CPU-GPU Interconnect | NVLink®-C2C |

| GPU-GPU Interconnect | PCIe |

| System Memory | |

| Memory | Slot Count: Onboard Memory; Max Memory: Up to 480GB ECC LPDDR5X; Additional GPU Memory: Up to 96GB ECC HBM3 |

| On-Board Devices | |

| Chipset | System on Chip |

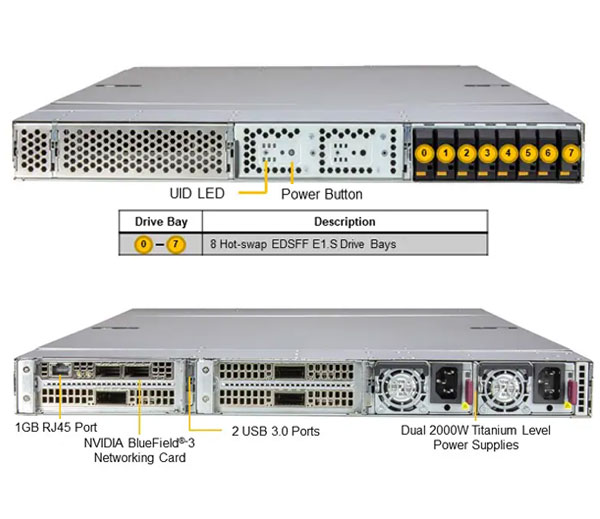

| Network Connectivity | 1x 1GbE BaseT with NVIDIA ConnectX®-7 or BlueField®-3 DPU |

| Input / Output | |

| LAN | 1 RJ45 1GbE (Dedicated IPMI port) |

| USB | 2 USB port(s) (2 rear) |

| System BIOS | |

| BIOS Type | AMI 32MB SPI Flash EEPROM |

| PC Health Monitoring | |

| CPU | 8+4 Phase-switching voltage regulator; Monitors for CPU Cores, Chipset Voltages, Memory |

| FAN | Fans with tachometer monitoring; Pulse Width Modulated (PWM) fan connectors; Status monitor for speed control |

| Temperature | Monitoring for CPU and chassis environment; Thermal Control for fan connectors |

| Chassis | |

| Form Factor | 1U Rackmount |

| Model | CSE-GP102TS-R000NDFP |

| Dimensions and Weight | |

| Height | 1.75" (44mm) |

| Width | 17.33" (440mm) |

| Depth | 37" (940mm) |

| Package | 9.5" (H) x 48" (W) x 28" (D) |

| Weight | Net Weight: 48.5 lbs (22 kg); Gross Weight: 65.5 lbs (29.7 kg) |

| Available Color | Silver |

| Expansion Slots | |

| PCI-Express (PCIe) | 3 PCIe 5.0 x16 FHFL slot(s) |

| Drive Bays / Storage | |

| Hot-swap | 8x E1.S hot-swap NVMe drive slots |

| M.2 | 2 M.2 NVMe |

| System Cooling | |

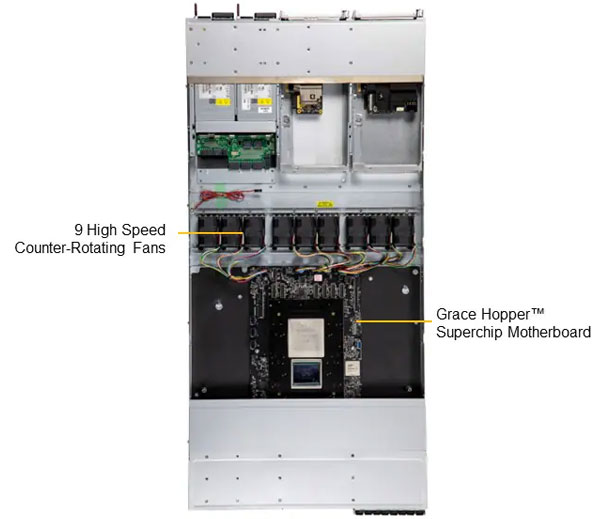

| Fans | 9 Removable heavy-duty 4CM Fan(s) |

| Power Supply | 2x 2000W Redundant Titanium Level power supplies |

| Dimension (W x H x L) | 73.5 x 40 x 185 mm |

| AC Input | 1000W: 100-127Vac / 50-60Hz; 1800W: 200-220Vac / 50-60Hz; 1980W: 220-230Vac / 50-60Hz; 2000W: 220-240Vac / 50-60Hz (for UL only); 2000W: 230-240Vac / 50-60Hz; 2000W: 230-240Vdc / 50-60Hz (for CQC only) |

| +12V | Max: 83A / Min: 0A (100Vac-127Vac); Max: 150A / Min: 0A (200Vac-220Vac); Max: 165A / Min: 0A (220Vac-230Vac); Max: 166A / Min: 0A (230Vac-240Vac) |

| 12V SB | Max: 3.5A / Min: 0A |

| Output Type | Backplanes (gold finger) |

| Operating Environment | |

| Environmental Spec. | Operating Temperature: 10°C to 35°C (50°F to 95°F); Non-operating Temperature: -40°C to 60°C (-40°F to 140°F); Operating Relative Humidity: 8% to 90% (non-condensing); Non-operating Relative Humidity: 5% to 95% (non-condensing) |
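The +12V current limits in the power supply rows line up with the AC input wattage ratings: multiplying each maximum current by the rail voltage gives roughly the rated output for that input range. A minimal sketch of that arithmetic, assuming a nominal 12.0V rail and ignoring the 12V standby output (the constant and function names are illustrative, not from the datasheet):

```python
# Nominal main rail voltage; assumed to be exactly 12.0 V for this estimate.
RAIL_VOLTS = 12.0

# (AC input range, max +12V current in amps, rated output in watts),
# copied from the AC Input and +12V rows of the table.
PSU_RATINGS = [
    ("100-127Vac", 83, 1000),
    ("200-220Vac", 150, 1800),
    ("220-230Vac", 165, 1980),
    ("230-240Vac", 166, 2000),
]


def rail_power_watts(max_amps, volts=RAIL_VOLTS):
    """Return the power available on the +12V rail at the given current."""
    return max_amps * volts


for ac_range, amps, rated in PSU_RATINGS:
    watts = rail_power_watts(amps)
    print(f"{ac_range}: {amps}A x {RAIL_VOLTS:.0f}V = {watts:.0f}W (rated {rated}W)")
```

Under these assumptions each computed value lands within a few watts of the rated figure. On 100-127Vac input a single supply delivers about half of its 2000W rating, which is worth factoring into rack power planning.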

The full turn-key data center solution accelerates time-to-delivery for mission-critical enterprise use cases and eliminates the complexity of building a large cluster, which was previously achievable only through the intensive design tuning and time-consuming optimization of supercomputing.

Cloud-Scale Inference Datasheet

With 256 NVIDIA GH200 Grace Hopper Superchips, 1U MGX Systems in 9 Racks

Key Features

- Unified GPU and CPU memory for cloud-scale high volume, low-latency, and high batch size inference

- 1U Air-cooled NVIDIA MGX Systems in 9 Racks, 256 NVIDIA GH200 Grace Hopper Superchips in one scalable unit

- Up to 144GB of HBM3e + 480GB of LPDDR5X, enough capacity to fit a 70B+ parameter model in one node

- 400Gb/s InfiniBand or Ethernet non-blocking networking connected to spine-leaf network fabric

- Customizable AI data pipeline storage fabric with industry-leading parallel file system options

- NVIDIA AI Enterprise software ready
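The 70B-parameter capacity claim in the feature list can be checked with simple arithmetic. A rough sketch, assuming 2 bytes per parameter (FP16/BF16 weights), decimal gigabytes, and counting weights only (no KV cache, activations, or runtime overhead); the function and constant names are illustrative:

```python
HBM3E_GB = 144     # per-node HBM3e capacity from the feature list
LPDDR5X_GB = 480   # per-node LPDDR5X capacity from the feature list


def weights_gb(params_billions, bytes_per_param=2):
    """Approximate weight footprint in decimal GB (1e9 params * bytes / 1e9)."""
    return params_billions * bytes_per_param


model_gb = weights_gb(70)             # 70B parameters at 2 bytes each
unified_gb = HBM3E_GB + LPDDR5X_GB    # combined CPU + GPU memory per node

print(f"70B weights: {model_gb} GB")
print(f"Fits in HBM3e alone: {model_gb <= HBM3E_GB}")
print(f"Remaining combined memory: {unified_gb - model_gb} GB")
```

Under these assumptions the weights take about 140GB, which just fits in the 144GB of HBM3e and leaves roughly 484GB of the combined memory for everything else. Lower-precision weights would shrink the footprint proportionally.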

Compute Node


Supermicro servers with the NVIDIA GH200 Grace Hopper Superchip

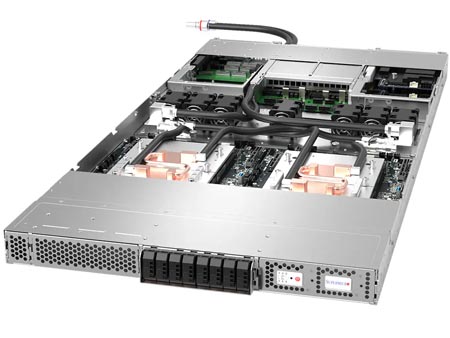

Construct new solutions for accelerated infrastructures, enabling scientists and engineers to focus on solving the world’s most important problems with larger datasets, more complex models, and new generative AI workloads. Within the same 1U chassis, Supermicro’s dual NVIDIA GH200 Grace Hopper Superchip systems deliver the highest level of performance for any application on the CUDA platform, with substantial speedups for AI workloads with high memory requirements. In addition to hosting up to 2 onboard H100 GPUs in a 1U form factor, the modular bays accept full-size PCIe expansion cards for present and future accelerated computing components, high-speed scale-out, and clustering.

| SKU | ARS-221GL-NHIR | ARS-111GL-NHR | ARS-111GL-NHR-LCC | ARS-111GL-DNHR-LCC |


| Form Factor | 2U Rackmount | 1U Rackmount | 1U Rackmount | 1U Rackmount |

| CPU | 72-core NVIDIA Grace CPU on GH200 Grace Hopper™ Superchip | 72-core NVIDIA Grace CPU on GH200 Grace Hopper™ Superchip | 72-core NVIDIA Grace CPU on GH200 Grace Hopper™ Superchip | 72-core NVIDIA Grace CPU on GH200 Grace Hopper™ Superchip |

| GPU | | | | |

| System Cooling | Air | Air | Air and liquid | Air and liquid |